A Simple Framework to Prioritize AI Use Cases

Founders and product teams often generate many AI ideas but struggle to decide what to build first. This framework helps prioritize AI use cases using business impact, implementation effort, and data readiness.

5/8/2024, 4 min read

Most founders and product teams do not struggle because they lack AI ideas. They struggle because they have too many.

In almost every AI strategy conversation, teams can quickly list ten or more possible use cases. Chatbots, recommendation engines, automation workflows, predictive analytics, smart assistants, and internal copilots all sound promising. The problem is not ideation. The problem is deciding what to build first.

A few months later, many of those same teams have still not shipped anything. Not because the ideas were weak, but because there was no structured way to prioritize them.

The real problem: idea overload

AI creates a special kind of execution paralysis. Unlike traditional features, AI initiatives often come with unclear implementation effort, uncertain data quality, and higher delivery risk.

That usually leads teams into one of two traps:

They choose the most exciting idea rather than the most practical one.

They keep discussing options without committing to a first step.

What they need is a simple framework that helps them move from a long list of possibilities to a focused execution plan.

A simple prioritization framework

A practical AI prioritization model should evaluate every use case across three dimensions:

Business impact: Will this improve revenue, reduce cost, increase engagement, or improve retention?

Implementation effort: How complex is the build in terms of engineering, integrations, workflows, and timelines?

Data readiness: Do you already have the data needed, and is it clean, available, and usable?

You can apply this in two simple ways:

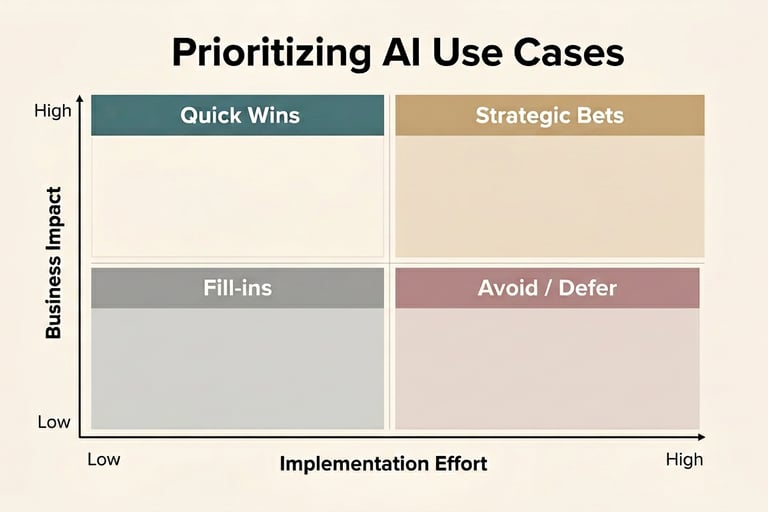

A 2×2 matrix to quickly visualize priorities.

A lightweight scoring model for more structured decision-making.

The 2×2 matrix

Plot each AI use case on two axes:

X-axis: Implementation effort, from low to high

Y-axis: Business impact, from low to high

This gives you four categories:

Quick wins: High impact, low effort

Strategic bets: High impact, high effort

Fill-ins: Low impact, low effort

Avoid or defer: Low impact, high effort

The goal is simple. Build quick wins first, plan strategic bets carefully, and avoid spending energy on ideas that are expensive but unlikely to move the business.
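The four categories can be expressed as a small classification rule. The following is a minimal sketch, assuming each use case has been scored from 1 to 5 on impact and effort and that anything above 3 counts as "high". The function name `quadrant`, the 1-to-5 scale, and the threshold are illustrative assumptions, not part of the framework itself.

```python
def quadrant(impact: int, effort: int, threshold: int = 3) -> str:
    """Place a use case in the 2x2 matrix.

    impact and effort are assumed to be scores from 1 (low) to 5 (high).
    A score above `threshold` is treated as "high" (an assumption).
    """
    high_impact = impact > threshold
    high_effort = effort > threshold

    if high_impact and not high_effort:
        return "Quick win"
    if high_impact and high_effort:
        return "Strategic bet"
    if not high_impact and not high_effort:
        return "Fill-in"
    return "Avoid or defer"


# Example: a high-impact, low-effort idea
print(quadrant(impact=5, effort=2))  # Quick win
```

Scores that sit right on the threshold are the ones worth debating as a team, since a one-point change moves them into a different category.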

Add data readiness as a third filter

The 2×2 is useful, but AI prioritization becomes much stronger when you also include data readiness.

A use case may look like a quick win on paper, but if the data is incomplete, fragmented, or inaccessible, it is not actually ready. That is why the best near-term AI opportunities are usually the ones with:

Clear business value

Manageable implementation complexity

Strong underlying data availability
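One way to apply this filter is to shortlist only the use cases that pass all three checks. The sketch below assumes 1-to-5 scores for each dimension. The `UseCase` class, the cut-off values, and the two sample ideas are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int     # 1 (low) to 5 (high)
    effort: int     # 1 (low) to 5 (high)
    readiness: int  # 1 (data missing) to 5 (clean, available, usable)


def near_term_candidates(use_cases, min_impact=4, max_effort=3, min_readiness=4):
    """Return names of use cases that pass all three checks.

    The default cut-offs are assumptions; adjust them to your own scale.
    """
    return [
        u.name
        for u in use_cases
        if u.impact >= min_impact
        and u.effort <= max_effort
        and u.readiness >= min_readiness
    ]


# Hypothetical ideas: "Idea A" looks like a quick win, but its data is weak.
ideas = [
    UseCase("Idea A", impact=5, effort=2, readiness=2),
    UseCase("Idea B", impact=4, effort=3, readiness=4),
]
print(near_term_candidates(ideas))  # ['Idea B']
```

Ideas that fail only the readiness check are not discarded. They become candidates once the data gap is closed.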

A worked example: Smart Scheduler

Imagine you are evaluating AI ideas for a fitness platform or SaaS product. Your list might include:

Smart Scheduler

AI Chat Support

Personalized Recommendations

Predictive Churn Alerts

Now take Smart Scheduler.

Business impact:

A smart scheduling feature can improve engagement, increase adherence, reduce churn, and create upsell opportunities. That gives it high strategic value.

Implementation effort:

It may require calendar integrations, scheduling logic, rules engines, and possibly machine learning. That makes it a medium-to-high effort initiative.

Data readiness:

If you already track usage patterns, attendance, preferences, and time-based behavior, you have a decent starting point. If not, you will need to start collecting that data before the feature can deliver value.

In most cases, Smart Scheduler lands in the strategic bets quadrant. It has strong upside, but it may not be the first feature to build.

Now compare that with AI Chat Support. If you already have FAQs, help articles, support logs, and a clear workflow, the data readiness is stronger and the build is lighter. That often makes it a better quick win, even if it feels less innovative.
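The comparison can also be run through a lightweight scoring model. This is a rough sketch, not a definitive formula: the weights, the 1-to-5 scale, and the scores assigned to the two features are illustrative assumptions that loosely follow the discussion above. Effort is inverted so that a lighter build scores higher.

```python
# Hypothetical integer weights (they sum to 10); tune them to your priorities.
WEIGHTS = {"impact": 4, "effort": 3, "readiness": 3}


def priority_score(impact: int, effort: int, readiness: int) -> int:
    """Weighted score from 1-5 inputs; higher is better (range 10 to 50)."""
    ease = 6 - effort  # invert effort so that low effort scores high
    return (
        WEIGHTS["impact"] * impact
        + WEIGHTS["effort"] * ease
        + WEIGHTS["readiness"] * readiness
    )


# Illustrative scores only; replace them with your own team's estimates.
candidates = {
    "Smart Scheduler": {"impact": 5, "effort": 4, "readiness": 3},
    "AI Chat Support": {"impact": 4, "effort": 2, "readiness": 4},
}

# Rank use cases from highest to lowest score.
ranked = sorted(
    ((name, priority_score(**scores)) for name, scores in candidates.items()),
    key=lambda pair: pair[1],
    reverse=True,
)

for name, score in ranked:
    print(f"{name}: {score}")
# AI Chat Support: 40
# Smart Scheduler: 35
```

With these assumed inputs, AI Chat Support ranks first even though Smart Scheduler has the higher impact score, which matches the quick win versus strategic bet reading above.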

The key insight

Not every high-impact idea should be built first.

Many teams prioritize the feature that sounds the most advanced or impressive. But the smarter path is to prioritize the use case that creates measurable business value fastest.

Early wins matter because they build momentum. They create stakeholder confidence, generate learning, and improve the data foundation for more advanced AI features later.

Why this matters for product strategy

AI prioritization is not just a backlog exercise. It is a product strategy decision.

The strongest AI roadmaps usually follow a progression:

Start with features that organize or surface existing data.

Move into prediction, recommendations, and decision support.

Then expand into automation and autonomous workflows.

This sequence reduces risk and increases the chance that each AI investment builds on the last one.

Common mistakes teams make

Here are the patterns I see most often:

Starting with a complex AI idea before validating data readiness

Underestimating integration and workflow complexity

Choosing use cases based on hype instead of business ROI

Trying to pursue too many AI initiatives at once

A simple framework helps avoid all four.

Where teams usually get stuck

Even with a framework, teams often need support to answer practical questions:

Which use case ties most closely to a business metric?

How much implementation effort is realistic?

What data gaps need to be fixed first?

Which opportunity should be shipped now versus planned for later?

That is where structured product strategy and AI roadmapping become valuable.

Need help prioritizing your AI use cases?

If your team has a growing list of AI ideas but no clear execution path, I help founders and product teams:

Evaluate and score AI use cases

Identify quick wins versus strategic bets

Build AI roadmaps aligned to business outcomes

Translate ideas into practical product plans

The goal is not to discuss AI endlessly. The goal is to pick the right use case, sequence it correctly, and ship value faster.

If you are evaluating multiple AI opportunities and need clarity on what to build first, Hatwartech can help you prioritize use cases, assess data readiness, and create a practical AI roadmap tied to business outcomes.